Chat App with FastAPI

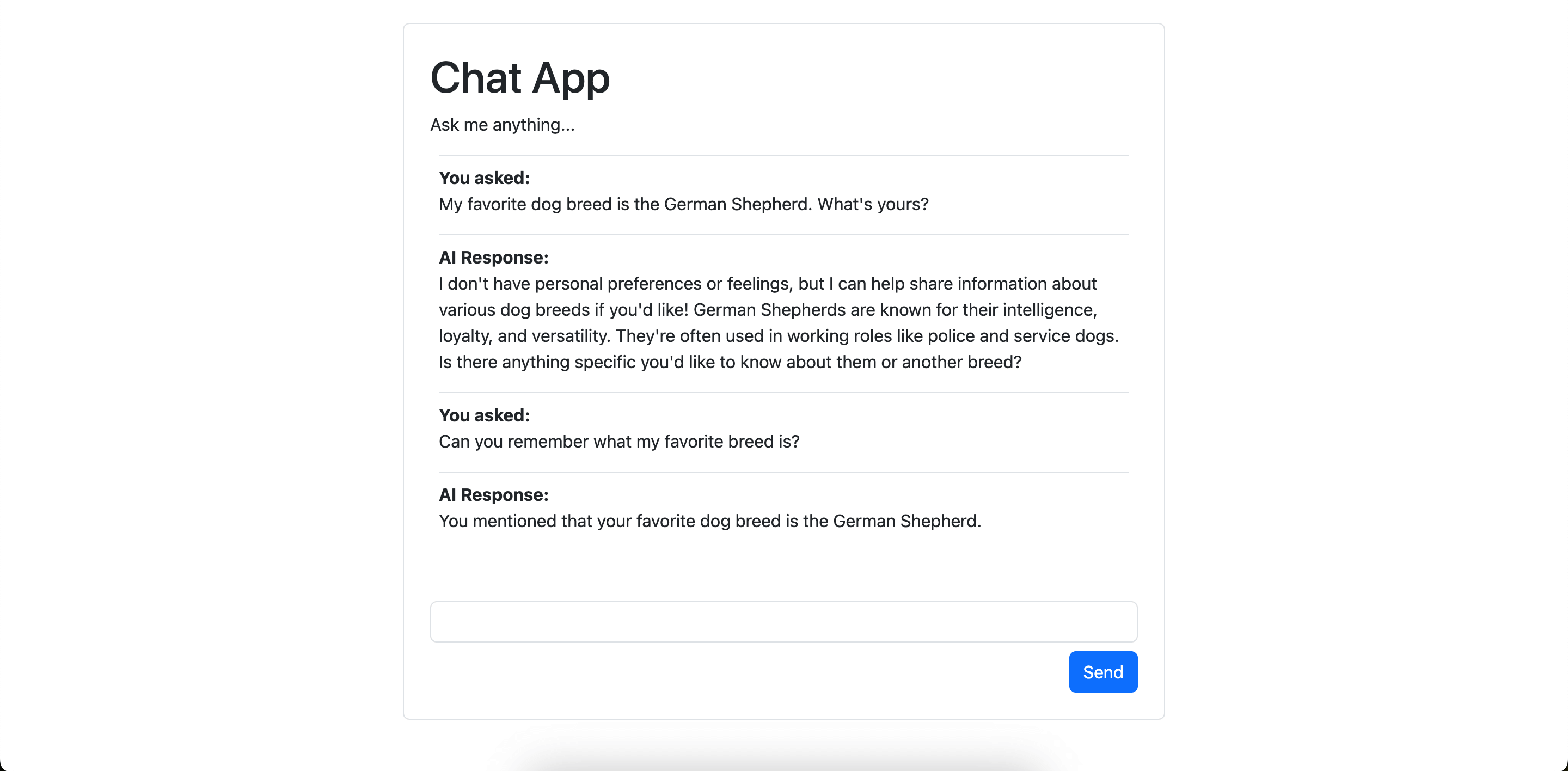

A simple chat app example built with FastAPI.

This example demonstrates storing chat history between requests and using it to give the model context for new responses.
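How the history is kept is not spelled out here, so the following is only a sketch under stated assumptions: it persists each conversation's messages as JSON rows in SQLite, keyed by a hypothetical conversation id, using Python's built-in `sqlite3`. `HistoryStore`, `append` and `load` are illustrative names, not part of pydantic-ai or this example. With pydantic-ai you would more likely serialize the agent's own message objects and pass the loaded list back through the `message_history` argument of `Agent.run`.

```python
import json
import sqlite3
from typing import Any


class HistoryStore:
    """Hypothetical store that keeps each conversation's messages as JSON rows in SQLite."""

    def __init__(self, path: str = ':memory:') -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            'create table if not exists messages('
            'id integer primary key, '
            'conversation_id text not null, '
            'message_json text not null)'
        )

    def append(self, conversation_id: str, messages: list[dict[str, Any]]) -> None:
        # store only the messages produced by the latest request
        self.conn.executemany(
            'insert into messages(conversation_id, message_json) values(?, ?)',
            [(conversation_id, json.dumps(m)) for m in messages],
        )
        self.conn.commit()

    def load(self, conversation_id: str) -> list[dict[str, Any]]:
        # full history in insertion order, ready to give the model as context
        rows = self.conn.execute(
            'select message_json from messages where conversation_id = ? order by id',
            (conversation_id,),
        ).fetchall()
        return [json.loads(row[0]) for row in rows]


store = HistoryStore()
# first request: the user's prompt and the model's reply are saved
store.append('demo', [{'role': 'user', 'content': 'Hi'}, {'role': 'model', 'content': 'Hello!'}])
# second request: load the earlier turns as context, then save the new ones
context = store.load('demo')
store.append('demo', [{'role': 'user', 'content': 'What did I just say?'}])
print([m['content'] for m in store.load('demo')])
# ['Hi', 'Hello!', 'What did I just say?']
```

Appending only the new messages per request keeps writes small, while `load` always returns the complete, ordered history.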

Most of the complex logic is split between chat_app.py, which streams the response to the browser, and chat_app.ts, which renders messages in the browser.
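As a rough picture of what streaming to the browser can look like, here is a sketch of a hypothetical async generator that emits newline-delimited JSON, the format chat_app.ts parses. It re-sends the model message with the same `timestamp` each time its text grows, so the browser can update one message in place. `fake_model_stream` stands in for a real model, and the wire format is an assumption for illustration, not a description of what `StarletteChat` produces. In FastAPI, a generator like `stream_ndjson` could be returned wrapped in a `StreamingResponse`.

```python
import asyncio
import json
from collections.abc import AsyncIterator


async def fake_model_stream() -> AsyncIterator[str]:
    """Hypothetical stand-in for a model that yields text deltas."""
    for delta in ['Hel', 'lo', ' there!']:
        await asyncio.sleep(0)  # hand control back to the event loop, as real network I/O would
        yield delta


def ndjson_line(message: dict) -> bytes:
    # one JSON object per line, terminated by a newline
    return (json.dumps(message) + '\n').encode('utf-8')


async def stream_ndjson(prompt: str, user_ts: str, model_ts: str) -> AsyncIterator[bytes]:
    # echo the user's prompt first so the browser can render it straight away
    yield ndjson_line({'role': 'user', 'content': prompt, 'timestamp': user_ts})
    text = ''
    async for delta in fake_model_stream():
        text += delta
        # the model message keeps one timestamp, so each line replaces the previous version
        yield ndjson_line({'role': 'model', 'content': text, 'timestamp': model_ts})


async def collect() -> list[bytes]:
    return [
        chunk
        async for chunk in stream_ndjson('Hi', '2024-01-01T00:00:00Z', '2024-01-01T00:00:01Z')
    ]


chunks = asyncio.run(collect())
for chunk in chunks:
    print(chunk.decode('utf-8'), end='')
```

Re-sending the accumulated text (rather than only the delta) costs some bandwidth but means each line is self-contained, so the client never has to stitch fragments together.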

Running the Example

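With dependencies installed and environment variables set (for example `OPENAI_API_KEY`, since the agent uses an OpenAI model), run:

```bash
python -m pydantic_ai_examples.chat_app
```

or, with uv:

```bash
uv run -m pydantic_ai_examples.chat_app
```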

Then open the app at localhost:8000.

Example Code

Python code that runs the chat app:

chat_app.py

"""Simple chat app example build with FastAPI.

Run with:

uv run -m pydantic_ai_examples.chat_app

"""

from __future__ import annotations as _annotations

from contextlib import asynccontextmanager

from dataclasses import dataclass

from pathlib import Path

import fastapi

import logfire

from fastapi import Depends, Request, Response

from pydantic_ai import Agent, RunContext

from pydantic_ai.vercel_ai_elements.starlette import StarletteChat

from .sqlite_database import Database

# 'if-token-present' means nothing will be sent (and the example will work) if you don't have logfire configured

logfire.configure(send_to_logfire='if-token-present')

logfire.instrument_pydantic_ai()

THIS_DIR = Path(__file__).parent

sql_schema = """

create table if not exists memory(

id integer primary key,

user_id integer not null,

value text not null,

unique(user_id, value)

);"""

@asynccontextmanager

async def lifespan(_app: fastapi.FastAPI):

async with Database.connect(sql_schema) as db:

yield {'db': db}

@dataclass

class Deps:

conn: Database

user_id: int

chat_agent = Agent(

'openai:gpt-4.1',

deps_type=Deps,

instructions="""

You are a helpful assistant.

Always reply with markdown. ALWAYS use code fences for code examples and lines of code.

""",

)

@chat_agent.tool

async def record_memory(ctx: RunContext[Deps], value: str) -> str:

"""Use this tool to store information in memory."""

await ctx.deps.conn.execute(

'insert into memory(user_id, value) values(?, ?) on conflict do nothing',

ctx.deps.user_id,

value,

commit=True,

)

return 'Value added to memory.'

@chat_agent.tool

async def retrieve_memories(ctx: RunContext[Deps], memory_contains: str) -> str:

"""Get all memories about the user."""

rows = await ctx.deps.conn.fetchall(

'select value from memory where user_id = ? and value like ?',

ctx.deps.user_id,

f'%{memory_contains}%',

)

return '\n'.join([row[0] for row in rows])

starlette_chat = StarletteChat(chat_agent)

app = fastapi.FastAPI(lifespan=lifespan)

logfire.instrument_fastapi(app)

async def get_db(request: Request) -> Database:

return request.state.db

@app.options('/api/chat')

def options_chat():

pass

@app.post('/api/chat')

async def get_chat(request: Request, database: Database = Depends(get_db)) -> Response:

return await starlette_chat.dispatch_request(request, deps=Deps(database, 123))

if __name__ == '__main__':

import uvicorn

uvicorn.run(

'pydantic_ai_examples.chat_app:app', reload=True, reload_dirs=[str(THIS_DIR)]

)
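To see what the `memory` table's `unique(user_id, value)` constraint and the `on conflict do nothing` clause buy you, the synchronous sketch below replays the two tools' SQL with Python's built-in `sqlite3` module instead of the example's `Database` helper (whose API is not shown here). The helper names `record` and `retrieve` are hypothetical. The sketch also escapes `%` and `_` in the search text, which the example's `f'%{memory_contains}%'` pattern does not do; that escaping is a suggested hardening, not part of the original.

```python
import sqlite3

conn = sqlite3.connect(':memory:')
# same schema as sql_schema in chat_app.py
conn.execute(
    """
    create table if not exists memory(
        id integer primary key,
        user_id integer not null,
        value text not null,
        unique(user_id, value)
    )"""
)


def record(user_id: int, value: str) -> None:
    # a duplicate (user_id, value) pair is silently skipped instead of raising IntegrityError
    conn.execute(
        'insert into memory(user_id, value) values(?, ?) on conflict do nothing',
        (user_id, value),
    )
    conn.commit()


def retrieve(user_id: int, contains: str) -> list[str]:
    # escape LIKE wildcards so a literal '%' or '_' in the query is matched as-is
    escaped = contains.replace('\\', '\\\\').replace('%', '\\%').replace('_', '\\_')
    rows = conn.execute(
        "select value from memory where user_id = ? and value like ? escape '\\'",
        (user_id, f'%{escaped}%'),
    ).fetchall()
    return [row[0] for row in rows]


record(123, 'likes tea')
record(123, 'likes tea')  # ignored: already stored for this user
record(123, 'has a dog named Rex')
record(456, 'likes tea')  # different user, so not a duplicate

print(retrieve(123, 'tea'))  # ['likes tea']
print(conn.execute('select count(*) from memory').fetchone()[0])  # 3
```

Note that SQLite's `LIKE` is case-insensitive for ASCII letters by default, so a search for `TEA` also finds `likes tea`.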

Simple HTML page to render the app:

chat_app.html

```html
<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <meta name="viewport" content="width=device-width, initial-scale=1.0">
  <title>Chat App</title>
  <link href="https://cdn.jsdelivr.net/npm/bootstrap@5.3.3/dist/css/bootstrap.min.css" rel="stylesheet">
  <style>
    main {
      max-width: 700px;
    }
    #conversation .user::before {
      content: 'You asked: ';
      font-weight: bold;
      display: block;
    }
    #conversation .model::before {
      content: 'AI Response: ';
      font-weight: bold;
      display: block;
    }
    #spinner {
      opacity: 0;
      transition: opacity 500ms ease-in;
      width: 30px;
      height: 30px;
      border: 3px solid #222;
      border-bottom-color: transparent;
      border-radius: 50%;
      animation: rotation 1s linear infinite;
    }
    @keyframes rotation {
      0% { transform: rotate(0deg); }
      100% { transform: rotate(360deg); }
    }
    #spinner.active {
      opacity: 1;
    }
  </style>
</head>
<body>
  <main class="border rounded mx-auto my-5 p-4">
    <h1>Chat App</h1>
    <p>Ask me anything...</p>
    <div id="conversation" class="px-2"></div>
    <div class="d-flex justify-content-center mb-3">
      <div id="spinner"></div>
    </div>
    <form method="post">
      <input id="prompt-input" name="prompt" class="form-control"/>
      <div class="d-flex justify-content-end">
        <button class="btn btn-primary mt-2">Send</button>
      </div>
    </form>
    <div id="error" class="d-none text-danger">
      An error occurred; check the browser developer console for more information.
    </div>
  </main>
  <script src="https://cdnjs.cloudflare.com/ajax/libs/typescript/5.6.3/typescript.min.js" crossorigin="anonymous" referrerpolicy="no-referrer"></script>
  <script type="module">
    // To let me write TypeScript without adding the burden of npm, we do a dirty, non-production-ready hack
    // and transpile the TypeScript code in the browser.
    // This is (arguably) a neat demo trick, but not suitable for production!
    async function loadTs() {
      const response = await fetch('/chat_app.ts');
      const tsCode = await response.text();
      const jsCode = window.ts.transpile(tsCode, { target: "es2015" });
      const script = document.createElement('script');
      script.type = 'module';
      script.text = jsCode;
      document.body.appendChild(script);
    }

    loadTs().catch((e) => {
      console.error(e);
      document.getElementById('error').classList.remove('d-none');
      document.getElementById('spinner').classList.remove('active');
    });
  </script>
</body>
</html>
```

TypeScript code to handle rendering the messages. To keep this simple (and at the risk of offending frontend developers), the TypeScript code is passed to the browser as plain text and transpiled there.

chat_app.ts

```ts
// BIG FAT WARNING: to avoid the complexity of npm, this TypeScript is compiled in the browser,
// so there's currently no static type checking
import { marked } from 'https://cdnjs.cloudflare.com/ajax/libs/marked/15.0.0/lib/marked.esm.js'

const convElement = document.getElementById('conversation')
const promptInput = document.getElementById('prompt-input') as HTMLInputElement
const spinner = document.getElementById('spinner')

// stream the response and render messages as each chunk is received
// data is sent as newline-delimited JSON
async function onFetchResponse(response: Response): Promise<void> {
  let text = ''
  const decoder = new TextDecoder()
  if (response.ok) {
    const reader = response.body.getReader()
    while (true) {
      const {done, value} = await reader.read()
      if (done) {
        break
      }
      // {stream: true} keeps a multi-byte character that is split across chunks intact
      text += decoder.decode(value, {stream: true})
      addMessages(text)
      spinner.classList.remove('active')
    }
    // flush any bytes still buffered in the decoder
    text += decoder.decode()
    addMessages(text)
    promptInput.disabled = false
    promptInput.focus()
  } else {
    const text = await response.text()
    console.error(`Unexpected response: ${response.status}`, {response, text})
    throw new Error(`Unexpected response: ${response.status}`)
  }
}

// The message format; it matches pydantic-ai's for brevity and clarity.
// In production, you might not want to keep this format all the way to the frontend.
interface Message {
  role: string
  content: string
  timestamp: string
}

// Take the raw response text and render messages into the `#conversation` element.
// The message timestamp is assumed to uniquely identify a message and is used to deduplicate,
// so data about the same message can be sent multiple times and it will be updated
// instead of creating a new message element.
function addMessages(responseText: string) {
  const lines = responseText.split('\n').filter(line => line.length > 1)
  const messages: Message[] = []
  lines.forEach((line, i) => {
    try {
      messages.push(JSON.parse(line))
    } catch (e) {
      // while streaming, the last line may be an incomplete JSON object;
      // it is parsed again once more data arrives, so only rethrow for earlier lines
      if (i !== lines.length - 1) {
        throw e
      }
    }
  })
  for (const message of messages) {
    // we use the timestamp as a crude element id
    const {timestamp, role, content} = message
    const id = `msg-${timestamp}`
    let msgDiv = document.getElementById(id)
    if (!msgDiv) {
      msgDiv = document.createElement('div')
      msgDiv.id = id
      msgDiv.title = `${role} at ${timestamp}`
      msgDiv.classList.add('border-top', 'pt-2', role)
      convElement.appendChild(msgDiv)
    }
    msgDiv.innerHTML = marked.parse(content)
  }
  window.scrollTo({ top: document.body.scrollHeight, behavior: 'smooth' })
}

function onError(error: any) {
  console.error(error)
  document.getElementById('error').classList.remove('d-none')
  document.getElementById('spinner').classList.remove('active')
}

async function onSubmit(e: SubmitEvent): Promise<void> {
  e.preventDefault()
  spinner.classList.add('active')
  const body = new FormData(e.target as HTMLFormElement)

  promptInput.value = ''
  promptInput.disabled = true

  const response = await fetch('/chat/', {method: 'POST', body})
  await onFetchResponse(response)
}

// call onSubmit when the form is submitted (e.g. user clicks the send button or hits Enter)
document.querySelector('form').addEventListener('submit', (e) => onSubmit(e).catch(onError))

// load messages on page load
fetch('/chat/').then(onFetchResponse).catch(onError)
```
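The two ideas behind chat_app.ts (parse newline-delimited JSON as bytes arrive, and key messages by `timestamp` so a re-sent message replaces its earlier version) can be restated outside the browser. The Python sketch below is illustrative only; `NdjsonMessageBuffer` is a hypothetical name. It uses an incremental UTF-8 decoder, which plays the same role as passing `{stream: true}` to `TextDecoder.decode` in the browser, and it keeps the unfinished tail of the stream buffered so a JSON object split across two chunks is only parsed once its newline arrives.

```python
import codecs
import json
from typing import Any


class NdjsonMessageBuffer:
    """Hypothetical helper: parse a byte stream of NDJSON messages keyed by timestamp."""

    def __init__(self) -> None:
        # holds back an incomplete multi-byte UTF-8 sequence until the rest arrives
        self._decoder = codecs.getincrementaldecoder('utf-8')()
        # text after the last newline, possibly a partial JSON object
        self._pending = ''
        # dicts keep insertion order, so a re-sent message stays in its original position
        self.messages: dict[str, dict[str, Any]] = {}

    def _parse_lines(self, text: str) -> None:
        self._pending += text
        *complete, self._pending = self._pending.split('\n')
        for line in complete:
            if line.strip():
                message = json.loads(line)
                # a repeated timestamp replaces the earlier content instead of adding a message
                self.messages[message['timestamp']] = message

    def feed(self, chunk: bytes) -> None:
        self._parse_lines(self._decoder.decode(chunk))

    def finish(self) -> None:
        # flush the decoder and treat any unterminated remainder as a final line
        self._parse_lines(self._decoder.decode(b'', final=True) + '\n')


stream = (
    '{"role": "user", "content": "Café?", "timestamp": "t1"}\n'
    '{"role": "model", "content": "Oui", "timestamp": "t2"}\n'
    '{"role": "model", "content": "Oui, un café", "timestamp": "t2"}'  # no trailing newline
).encode('utf-8')

buf = NdjsonMessageBuffer()
# split the bytes at arbitrary points, as network chunks would be
for i in range(0, len(stream), 7):
    buf.feed(stream[i:i + 7])
buf.finish()
print([(m['role'], m['content']) for m in buf.messages.values()])
# [('user', 'Café?'), ('model', 'Oui, un café')]
```

Keeping messages in a dict mirrors the DOM lookup by the `msg-${timestamp}` id: assigning to an existing key updates the content without moving the entry.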